After two years of development and some deliberation, AMD decided that there is no business case for running CUDA applications on AMD GPUs. One of the terms of my contract with AMD was that if AMD did not find it fit for further development, I could release it. Which brings us to today.

All I need to know about AMD is this:

Whenever amdgpu.ko is loaded, the display (or in some cases the whole system) is unstable. Even if it works great now, one day it won’t: after an update, the machine will inexplicably start crashing randomly or displaying garbage.

This has held true for years for me on many machines I’ve installed Linux on, and it still does: not a week ago, I updated a laptop with a Renoir chipset in it (RX Vega 6) that had been stable for years, and now the display gets corrupted whenever I switch VT. Because amdgpu…

Not bashing on AMD or Nvidia. This has just been my reality. As a result, whenever I have a choice, I go with Intel graphics because it never causes me as much of a headache.

Intel graphics has issues of its own, especially on laptops. Whatever power-saving features Intel managed to add to their Windows drivers need to be turned off on Linux, or they’ll cause weird issues or even physical damage (in one particularly nasty bug, the Intel driver made the backlight strobe).

In my experience, amdgpu has been fine, though I’ve only used it on an older laptop. It only ever caused problems for me on Ubuntu (with its old kernels); things became a lot more stable when I switched to Manjaro.

If you can consistently get the display to corrupt, you should report the bug to your distro. There’s a good chance this is a regression of some kind that can be patched.

Now let’s get this working on Nvidia hardware :P

As pointless as it sounds, it would be a great way to test the system and to call alternative implementations of each proprietary Nvidia library. It would also be great for debugging and development if it provided an API for switching implementations at runtime.
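To make the "switch implementations at runtime" idea concrete, here is a minimal Python sketch. Everything in it is hypothetical: `BackendRegistry`, the backend names, and the toy `saxpy` functions only stand in for real library entry points and are not part of ZLUDA or any Nvidia library.

```python
class BackendRegistry:
    """Holds alternative implementations of one call and dispatches to the active one."""

    def __init__(self):
        self._impls = {}      # backend name -> callable
        self._active = None   # name of the backend currently in use

    def register(self, name, impl):
        self._impls[name] = impl
        # The first registered backend becomes the default.
        if self._active is None:
            self._active = name

    def use(self, name):
        """Switch the active implementation at runtime."""
        if name not in self._impls:
            raise KeyError(f"unknown backend: {name}")
        self._active = name

    def __call__(self, a, x, y):
        if self._active is None:
            raise RuntimeError("no backend registered")
        return self._impls[self._active](a, x, y)


def saxpy_reference(a, x, y):
    # Stand-in for the "proprietary" implementation: a*x + y, element-wise.
    return [a * xi + yi for xi, yi in zip(x, y)]


def saxpy_alternative(a, x, y):
    # Stand-in for an alternative implementation of the same call.
    out = list(y)
    for i, xi in enumerate(x):
        out[i] += a * xi
    return out


saxpy = BackendRegistry()
saxpy.register("reference", saxpy_reference)
saxpy.register("alternative", saxpy_alternative)
```

Calling `saxpy.use("alternative")` between two identical calls and comparing the results is the kind of cross-checking this would allow.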

Those benchmarks are impressive indeed. I certainly care much more about having extra VRAM than a little extra speed, comparing the 7900 XT or 7900 XTX to the RTX 4080.

I’d love to see some benchmarks for various LLMs and image generators.

Now we’ll see if Nvidia drops CUDA immediately, or waits until next quarter.

Do LLM or that AI image stuff run on CUDA?

CUDA is the usual way to run compute workloads on Nvidia GPUs. AI stuff almost always needs a GPU for the best performance.

Yes, llama.cpp and its derivatives and Stable Diffusion run on CUDA; they also run on ROCm. LLM fine-tuning is mostly CUDA as well. ROCm implementations for that are less mature, but coming along.

Nearly all such software supports CUDA (which up to now was Nvidia-only), and some also supports AMD through ROCm, DirectML, ONNX, or some other means, but CUDA is the most common. This will open up more of those to users with AMD hardware.

They are usually released for CUDA first, and if a project gets popular enough, someone will come in and port it to other platforms, which can take a while, especially for ROCm. Apple M-series ports usually appear before ROCm ones, which shows how much the dev community dislikes working with ROCm. A famous example is geohot throwing in the towel after working with ROCm for a while.

ROCm DKMS modules

Huh? What are these?

Since when does ROCm require kernel modules? DRI exists?

Technically it always has. ROCm comes with a “backported” amdgpu module, and that’s the one they supposedly test and officially validate with. It mostly exists for the ancient kernels shipped with old long-term support distros.
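If you want to see whether an amdgpu module is loaded at all on your machine, a minimal Python sketch is below. It only assumes the standard Linux `/proc/modules` format, where the module name is the first whitespace-separated field on each line; the function names are my own. It does not tell you whether the module came from the in-tree kernel or from a DKMS build.

```python
from pathlib import Path
from typing import Set


def loaded_modules(proc_modules_text: str) -> Set[str]:
    """Return the module names found in text in /proc/modules format."""
    names = set()
    for line in proc_modules_text.splitlines():
        fields = line.split()
        if fields:
            # The first field on each line is the module name.
            names.add(fields[0])
    return names


def amdgpu_loaded(path: str = "/proc/modules") -> bool:
    """True if an amdgpu module is currently loaded (False if the file is missing)."""
    p = Path(path)
    if not p.exists():
        return False
    return "amdgpu" in loaded_modules(p.read_text())
```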

Of course, ROCm being ROCm, nobody is running an officially supported configuration anyway, and the thing is never going to work at an acceptable level. This won’t change that, since it’s still built on top of ROCm.

This is the best summary I could come up with:

While there have been efforts by AMD over the years to make it easier to port codebases targeting NVIDIA’s CUDA API to run atop HIP/ROCm, it still requires work on the part of developers.

The tooling has improved, such as with HIPIFY to help with auto-generating HIP code, but it isn’t a simple, instant, or guaranteed solution, especially if striving for optimal performance.
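As a rough illustration of why source-level porting is only part of the job, here is a toy Python sketch of the kind of renaming involved. It is not how HIPIFY is implemented: the mapping covers only a handful of CUDA runtime names and their HIP counterparts, and `toy_hipify` is a hypothetical helper. Renaming like this leaves kernel code and performance tuning untouched.

```python
import re

# A few CUDA runtime names and their HIP counterparts (far from complete).
CUDA_TO_HIP = {
    "cudaMalloc": "hipMalloc",
    "cudaMemcpy": "hipMemcpy",
    "cudaFree": "hipFree",
    "cudaDeviceSynchronize": "hipDeviceSynchronize",
    "cudaMemcpyHostToDevice": "hipMemcpyHostToDevice",
}

# Match whole identifiers only, so longer names and user-defined wrappers
# that merely contain a CUDA name are left alone.
_PATTERN = re.compile(
    r"\b(" + "|".join(sorted(CUDA_TO_HIP, key=len, reverse=True)) + r")\b"
)


def toy_hipify(source: str) -> str:
    """Rename the known CUDA identifiers in `source` to their HIP equivalents."""
    return _PATTERN.sub(lambda m: CUDA_TO_HIP[m.group(1)], source)
```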

In practice, for many real-world workloads, ZLUDA is a solution for end users to run CUDA-enabled software without any developer intervention.

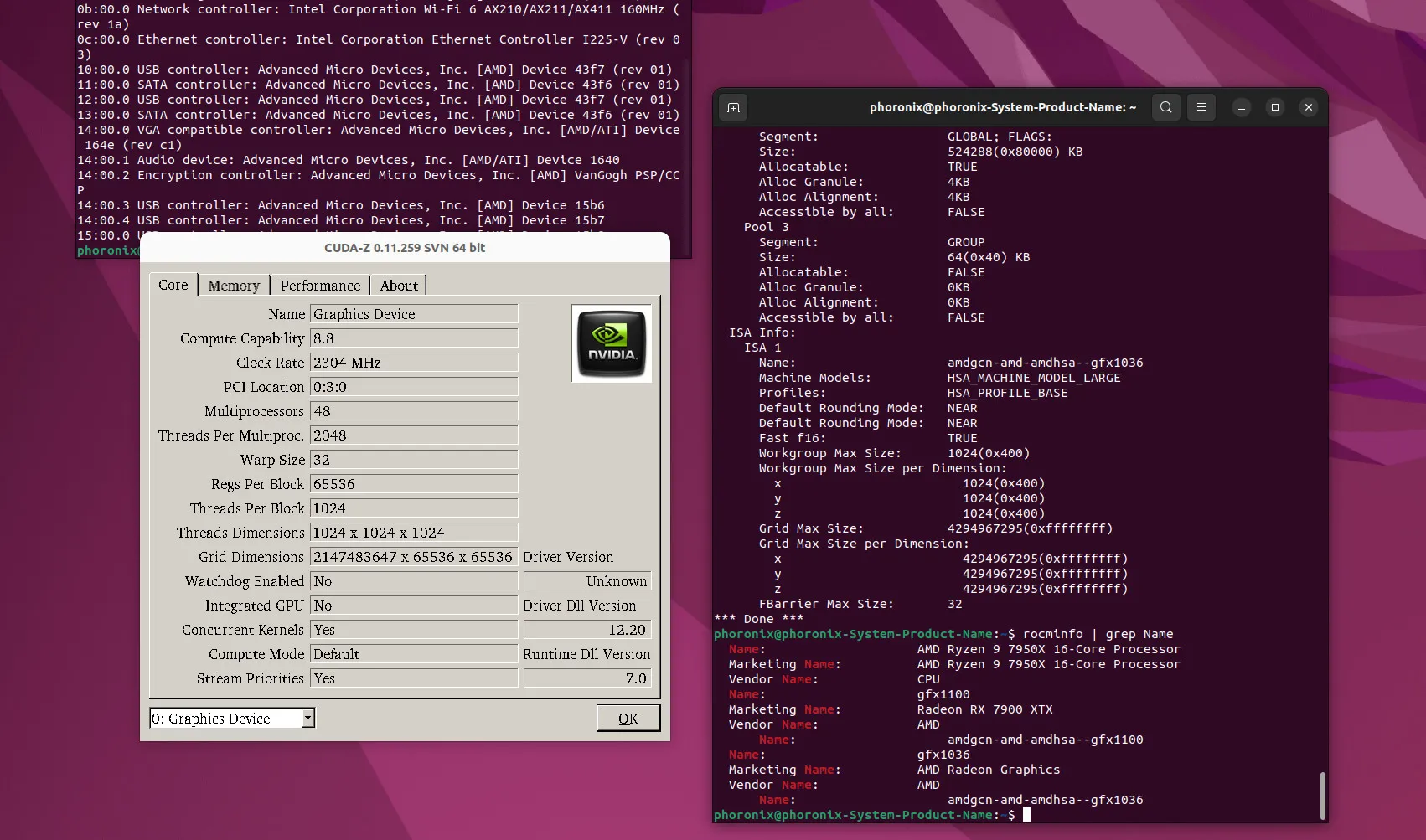

Here is more information on this “skunkworks” project that is now available as open-source along with some of my own testing and performance benchmarks of this CUDA implementation built for Radeon GPUs.

For reasons unknown to me, AMD decided this year to discontinue funding the effort and not release it as any software product.

Andrzej Janik reached out and provided access to the new ZLUDA implementation for AMD ROCm to allow me to test it out and benchmark it in advance of today’s planned public announcement.


For reasons unknown to me, AMD decided this year to discontinue funding the effort

Presumably they did not want to see CUDA becoming the final de facto standard that everyone uses. It nearly did at one point a couple of years ago, despite the lack of openness and lack of AMD hardware support.

I heavily rely on CUDA for many things I do on my personal computer. If this establishes itself as a reliable method to use all the funky CUDA stuff on AMD cards, my next card will 100% be AMD.

If there were a drop-in equivalent to CUDA on AMD, I’d have several AMD cards right now.

They stopped funding the replacement, not CUDA.

By funding an API-compatible product, they are giving CUDA legitimacy as a common API. I can absolutely understand AMD not wanting a competitor’s invention and walled-off product to be anything resembling an industry standard.

It already has legitimacy. It’s their hardware that doesn’t, despite the decent raw FLOPS and large memory.

That is contradicted by the headline. This easy confusion between CUDA (the API) and CUDA (the proprietary software package that is one implementation of it) illustrates the problem with CUDA.

ZLUDA seems to be an effort to fix that problem, but I don’t know what its chances of success might be.

It’s just a bad headline. They funded a CUDA replacement, then stopped funding it, as a result of which the project was released as open source.
